Fourier series clearly open the frequency domain as an interesting and useful way of determining how circuits and systems respond to periodic input signals. Can we use similar techniques for nonperiodic signals? What is the response of a filter to a single pulse? Addressing these issues requires us to find the Fourier spectrum of all signals, both periodic and nonperiodic ones. We need a definition for the Fourier spectrum of a signal, periodic or not. This spectrum is calculated by what is known as the Fourier transform.

Let $s_T(t)$ be a periodic signal having period $T$. We want to consider what happens to this signal's spectrum as we let the period become longer and longer. We denote the spectrum for any assumed value of the period by $c_k(T)$. We calculate the spectrum according to the familiar formula

$$c_k(T) = \frac{1}{T}\int_{-T/2}^{T/2} s_T(t)\, e^{-\frac{j2\pi kt}{T}}\, dt$$

where we have used a symmetric placement of the integration interval about the origin for subsequent derivational convenience. Let $f$ be a fixed frequency equaling $k/T$; we vary the frequency index $k$ proportionally as we increase the period. Define

$$S_T(f) \equiv T c_k(T) = \int_{-T/2}^{T/2} s_T(t)\, e^{-j2\pi ft}\, dt$$

making the corresponding Fourier series

$$s_T(t) = \sum_{k=-\infty}^{\infty} S_T(f)\, e^{j2\pi ft}\, \frac{1}{T}$$

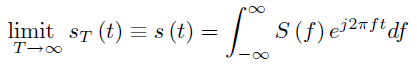

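As the period increases, the spectral lines become closer together, becoming a continuum. Therefore,

$$\lim_{T\to\infty} s_T(t) \equiv s(t) = \int_{-\infty}^{\infty} S(f)\, e^{j2\pi ft}\, df$$

with

$$S(f) = \int_{-\infty}^{\infty} s(t)\, e^{-j2\pi ft}\, dt$$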

$S(f)$ is the Fourier transform of $s(t)$ (the Fourier transform is symbolically denoted by the uppercase version of the signal's symbol) and is defined for any signal for which the integral (4.33) converges.

Example 4.4

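Let's calculate the Fourier transform of the pulse signal (Section 2.2.5: Pulse), $p(t)$. Taking the pulse to have unit height on $0 < t < \Delta$,

$$P(f) = \int_{-\infty}^{\infty} p(t)\, e^{-j2\pi ft}\, dt = \int_{0}^{\Delta} e^{-j2\pi ft}\, dt = \frac{1}{-j2\pi f}\left(e^{-j2\pi f\Delta} - 1\right)$$

$$P(f) = e^{-j\pi f\Delta}\, \frac{\sin(\pi f\Delta)}{\pi f}$$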

Note how closely this result resembles the expression for the Fourier series coefficients of the periodic pulse signal (Figure 4.10).

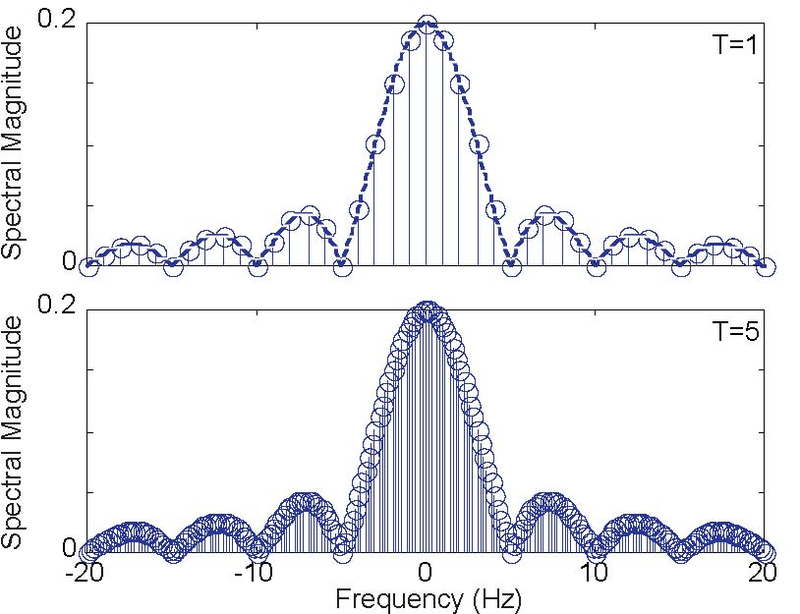

Figure 4.11 (Spectrum) shows how increasing the period does indeed lead to a continuum of coefficients, and that the Fourier transform does correspond to what the continuum becomes. The quantity $\frac{\sin(t)}{t}$ has a special name, the sinc (pronounced "sink") function, and is denoted by $\mathrm{sinc}(t)$. Thus, the magnitude of the pulse's Fourier transform equals $|\Delta\, \mathrm{sinc}(\pi f\Delta)|$.
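The claim that the scaled coefficients $T c_k(T)$ land on the transform's magnitude curve, with the lines spaced $1/T$ apart, can be checked numerically. The following is a minimal Python sketch rather than anything taken from the text: it assumes NumPy, a unit-height pulse on $0 < t < \Delta$, and an arbitrary width `DELTA = 0.2`; all function names are hypothetical.

```python
import numpy as np

DELTA = 0.2  # pulse width in seconds (illustrative value, not from the text)

def pulse(t):
    """Unit-height pulse on 0 < t < DELTA (assumed definition)."""
    return np.where((t > 0) & (t < DELTA), 1.0, 0.0)

def scaled_coefficient(k, T, n=20_000):
    """Midpoint-rule estimate of T * c_k(T): the integral of the pulse
    times exp(-j 2 pi k t / T) over one period, from -T/2 to T/2."""
    dt = T / n
    t = -T / 2 + (np.arange(n) + 0.5) * dt
    return np.sum(pulse(t) * np.exp(-2j * np.pi * k * t / T)) * dt

def pulse_transform_magnitude(f):
    """|DELTA sinc(pi f DELTA)| using the text's sinc(t) = sin(t)/t.
    np.sinc(x) is sin(pi x)/(pi x), so sinc(pi f DELTA) = np.sinc(f * DELTA)."""
    return np.abs(DELTA * np.sinc(f * DELTA))

def max_line_error(T, k_max=20):
    """Largest gap between |T c_k(T)| and the transform magnitude at f = k/T."""
    ks = np.arange(-k_max, k_max + 1)
    lines = np.array([abs(scaled_coefficient(k, T)) for k in ks])
    return float(np.max(np.abs(lines - pulse_transform_magnitude(ks / T))))

# Longer periods give more closely spaced lines on the same curve.
for T in (1.0, 4.0, 16.0):
    print(f"T = {T:5.1f}  line spacing = {1 / T:.4f} Hz  "
          f"max error = {max_line_error(T):.2e}")
```

Note that `np.sinc(x)` computes $\sin(\pi x)/(\pi x)$, whereas the sinc used here is $\sin(t)/t$; that is why the code passes `f * DELTA` rather than `np.pi * f * DELTA`.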

The Fourier transform relates a signal's time and frequency domain representations to each other. The direct Fourier transform (or simply the Fourier transform) calculates a signal's frequency domain representation from its time-domain variant (4.34). The inverse Fourier transform finds the time-domain representation from the frequency domain. Rather than explicitly writing the required integral, we often symbolically express these transform calculations as $\mathcal{F}(s)$ and $\mathcal{F}^{-1}(S)$, respectively.

$$\mathcal{F}(s) = S(f) = \int_{-\infty}^{\infty} s(t)\, e^{-j2\pi ft}\, dt$$

$$\mathcal{F}^{-1}(S) = s(t) = \int_{-\infty}^{\infty} S(f)\, e^{+j2\pi ft}\, df$$

We must have $s(t) = \mathcal{F}^{-1}(\mathcal{F}(s(t)))$ and $S(f) = \mathcal{F}(\mathcal{F}^{-1}(S(f)))$, and these results are indeed valid with minor exceptions.

Showing that you "get back to where you started" is difficult from an analytic viewpoint, and we won't try here. Note that the direct and inverse transforms differ only in the sign of the exponent.
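Although the analytic proof is skipped, the round trip is easy to observe numerically. The sketch below is only an illustration under stated assumptions: it uses NumPy, approximates both integrals by Riemann sums on a truncated grid, and picks the Gaussian $e^{-\pi t^2}$ (whose transform is $e^{-\pi f^2}$) as a convenient test signal that does not appear in the text. The only difference between the two kernels is the sign of the exponent.

```python
import numpy as np

def gaussian(t):
    """Illustrative test signal exp(-pi t^2); its transform is exp(-pi f^2)."""
    return np.exp(-np.pi * t**2)

# Grids wide and fine enough that the truncated Riemann sums are accurate.
t = np.linspace(-6.0, 6.0, 1201)
f = np.linspace(-6.0, 6.0, 1201)
dt = t[1] - t[0]
df = f[1] - f[0]

# Direct transform: S(f) = integral of s(t) exp(-j 2 pi f t) dt (negative exponent).
forward_kernel = np.exp(-2j * np.pi * np.outer(f, t))
S = forward_kernel @ gaussian(t) * dt

# Inverse transform: s(t) = integral of S(f) exp(+j 2 pi f t) df (positive exponent).
inverse_kernel = np.exp(+2j * np.pi * np.outer(t, f))
s_back = inverse_kernel @ S * df

roundtrip_error = float(np.max(np.abs(s_back - gaussian(t))))
transform_error = float(np.max(np.abs(S - np.exp(-np.pi * f**2))))
print(f"max |F^-1(F(s)) - s|      = {roundtrip_error:.2e}")
print(f"max |S(f) - exp(-pi f^2)| = {transform_error:.2e}")
```

Both errors should come out near machine precision for this smooth, rapidly decaying signal; signals with discontinuities would need finer grids.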

Exercise 4.8.1

The differing exponent signs mean that some curious results occur when we use the wrong sign. What is $\mathcal{F}(S(f))$? In other words, use the wrong exponent sign in evaluating the inverse Fourier transform.

Properties of the Fourier transform and some useful transform pairs are provided in the accompanying tables (Table 4.1 and Table 4.2). Especially important among these properties is Parseval's Theorem, which states that power computed in either domain equals the power in the other.
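Parseval's Theorem can be spot-checked on the pulse. The following sketch is a hedged illustration, not the author's method: it assumes NumPy, a unit-height pulse of width `DELTA` (an arbitrary value), and a truncated frequency range `f_max`, so the frequency-domain integral is only approximate.

```python
import numpy as np

DELTA = 0.5  # pulse width (illustrative value)

# Time domain: the integral of |p(t)|^2 for a unit-height pulse of width DELTA.
time_domain = DELTA

# Frequency domain: the integral of |P(f)|^2 with |P(f)| = |DELTA sinc(pi f DELTA)|.
# np.sinc(x) = sin(pi x)/(pi x), so the text's sinc(pi f DELTA) is np.sinc(f * DELTA).
f_max = 2000.0   # truncation limit (assumption); the integrand's tail decays like 1/f^2
n = 2_000_000
df = 2 * f_max / n
f = -f_max + (np.arange(n) + 0.5) * df  # midpoint grid over [-f_max, f_max]
frequency_domain = float(np.sum((DELTA * np.sinc(f * DELTA)) ** 2) * df)

print(f"time domain:      {time_domain:.5f}")
print(f"frequency domain: {frequency_domain:.5f}")
```

The two values agree up to the small amount lost by truncating the frequency integral; raising `f_max` narrows the gap.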

Of practical importance is the conjugate symmetry property: When s (t) is real-valued, the spectrum at negative frequencies equals the complex conjugate of the spectrum at the corresponding positive frequencies. Consequently, we need only plot the positive frequency portion of the spectrum (we can easily determine the remainder of the spectrum).
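Conjugate symmetry can be observed with any real-valued signal whose spectrum is genuinely complex. The sketch below is an assumption-laden illustration: it uses NumPy, a midpoint-rule approximation of the transform integral, and a ramp on $0 < t < 1$ chosen purely as a real, asymmetric test signal; the names `ramp` and `transform` are hypothetical.

```python
import numpy as np

def ramp(t):
    """Real-valued, asymmetric test signal: s(t) = t on 0 < t < 1, else 0."""
    return np.where((t > 0) & (t < 1), t, 0.0)

def transform(s, f, t_lo=-1.0, t_hi=2.0, n=30_000):
    """Midpoint-rule estimate of S(f) = integral of s(t) exp(-j 2 pi f t) dt."""
    dt = (t_hi - t_lo) / n
    t = t_lo + (np.arange(n) + 0.5) * dt
    return np.sum(s(t) * np.exp(-2j * np.pi * f * t)) * dt

freqs = np.array([0.25, 1.0, 3.7])
positive = np.array([transform(ramp, f) for f in freqs])
negative = np.array([transform(ramp, -f) for f in freqs])

# For real s(t), S(-f) should equal the complex conjugate of S(f).
symmetry_error = float(np.max(np.abs(negative - np.conj(positive))))
print("S(f)  =", positive)
print("S(-f) =", negative)
print(f"max |S(-f) - conj(S(f))| = {symmetry_error:.2e}")
```

Because the negative-frequency values are just conjugates of the positive-frequency ones, plotting the positive half loses no information.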

Exercise 4.8.2

How many Fourier transform operations need to be applied to get the original signal back: $\mathcal{F}(\cdots(\mathcal{F}(s))) = s(t)$?

Note that the mathematical relationships between the time domain and frequency domain versions of the same signal are termed transforms. We are transforming (in the nontechnical meaning of the word) a signal from one representation to another. We express Fourier transform pairs as $s(t) \leftrightarrow S(f)$. A signal's time and frequency domain representations are uniquely related to each other. A signal thus "exists" in both the time and frequency domains, with the Fourier transform bridging between the two. We can define an information-carrying signal in either the time or frequency domains; it behooves the wise engineer to use the simpler of the two.

A point that is commonly misunderstood is that, while a signal exists in both the time and frequency domains, a single formula expressing a signal must contain only time or only frequency: both cannot be present simultaneously. This situation mirrors what happens with complex amplitudes in circuits: impedances depend on frequency, and the time variable cannot appear. As we reveal how communications systems work and are designed, we will define signals entirely in the frequency domain without explicitly finding their time domain variants. This idea is shown in another module (Section 4.6), where we define Fourier series coefficients according to the letter to be transmitted. Thus a signal, though most familiarly defined in the time domain, can be defined equally well (and sometimes more easily) in the frequency domain.

We will learn (Section 4.9) that finding a linear, time-invariant system's output in the time domain can be most easily calculated by determining the input signal's spectrum, performing a simple calculation in the frequency domain, and inverse transforming the result. Furthermore, understanding communications and information processing systems requires a thorough understanding of signal structure and of how systems work in both the time and frequency domains.

The only difficulty in calculating the Fourier transform of any signal occurs when we have periodic signals (in either domain). Realizing that the Fourier series is a special case of the Fourier transform, we simply calculate the Fourier series coefficients instead, and plot them along with the spectra of nonperiodic signals on the same frequency axis.


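| | Time-Domain | Frequency Domain |
|---|---|---|
| Linearity | $a_1 s_1(t) + a_2 s_2(t)$ | $a_1 S_1(f) + a_2 S_2(f)$ |
| Conjugate Symmetry | $s(t) \in \mathbb{R}$ | $S(f) = S(-f)^{*}$ |
| Even Symmetry | $s(t) = s(-t)$ | $S(f) = S(-f)$ |
| Odd Symmetry | $s(t) = -s(-t)$ | $S(f) = -S(-f)$ |
| Scale Change | $s(at)$ | $\frac{1}{\lvert a \rvert} S\left(\frac{f}{a}\right)$ |
| Time Delay | $s(t - \tau)$ | $e^{-j2\pi f\tau} S(f)$ |
| Complex Modulation | $e^{j2\pi f_0 t} s(t)$ | $S(f - f_0)$ |
| Amplitude Modulation by Cosine | $s(t)\cos(2\pi f_0 t)$ | $\frac{S(f - f_0) + S(f + f_0)}{2}$ |
| Amplitude Modulation by Sine | $s(t)\sin(2\pi f_0 t)$ | $\frac{S(f - f_0) - S(f + f_0)}{2j}$ |
| Differentiation | $\frac{d}{dt} s(t)$ | $j2\pi f\, S(f)$ |
| Multiplication by t | $t\, s(t)$ | $\frac{1}{-j2\pi} \frac{dS(f)}{df}$ |
| Area | $\int_{-\infty}^{\infty} s(t)\, dt$ | $S(0)$ |
| Value at Origin | $s(0)$ | $\int_{-\infty}^{\infty} S(f)\, df$ |
| Parseval's Theorem | $\int_{-\infty}^{\infty} \lvert s(t) \rvert^2\, dt$ | $\int_{-\infty}^{\infty} \lvert S(f) \rvert^2\, df$ |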

Example 4.5

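In communications, a very important operation on a signal $s(t)$ is to amplitude modulate it. Using this operation more as an example than to elaborate on the communications aspects here, we want to compute the Fourier transform (the spectrum) of

$$x(t) = (1 + s(t))\cos(2\pi f_c t)$$

Thus,

$$(1 + s(t))\cos(2\pi f_c t) = \cos(2\pi f_c t) + s(t)\cos(2\pi f_c t)$$

For the spectrum of $\cos(2\pi f_c t)$, we use the Fourier series. Its period is $1/f_c$, and its only nonzero Fourier coefficients are $c_{\pm 1} = \frac{1}{2}$. The second term is not periodic unless $s(t)$ has the same period as the sinusoid. Writing $s(t)$ as the inverse Fourier transform of its spectrum, the second term becomes

$$s(t)\cos(2\pi f_c t) = \int_{-\infty}^{\infty} S(f)\, e^{j2\pi ft}\, df\, \cos(2\pi f_c t)$$

Using Euler's relation for the cosine,

$$s(t)\cos(2\pi f_c t) = \frac{1}{2}\int_{-\infty}^{\infty} S(f)\, e^{j2\pi (f + f_c)t}\, df + \frac{1}{2}\int_{-\infty}^{\infty} S(f)\, e^{j2\pi (f - f_c)t}\, df$$

$$s(t)\cos(2\pi f_c t) = \frac{1}{2}\int_{-\infty}^{\infty} S(f - f_c)\, e^{j2\pi ft}\, df + \frac{1}{2}\int_{-\infty}^{\infty} S(f + f_c)\, e^{j2\pi ft}\, df$$

$$s(t)\cos(2\pi f_c t) = \int_{-\infty}^{\infty} \frac{S(f - f_c) + S(f + f_c)}{2}\, e^{j2\pi ft}\, df$$

Exploiting the uniqueness property of the Fourier transform, we have

$$\mathcal{F}(s(t)\cos(2\pi f_c t)) = \frac{S(f - f_c) + S(f + f_c)}{2}$$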

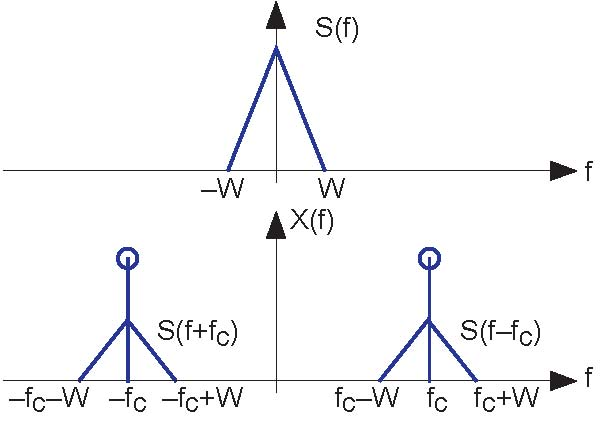

This component of the spectrum consists of the original signal's spectrum delayed and advanced in frequency. The spectrum of the amplitude modulated signal is shown in Figure 4.12.
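The shift of the spectrum to $\pm f_c$ can be confirmed numerically. The sketch below is an illustration only, under these assumptions: NumPy is available, the baseband signal is a unit-height pulse on $0 < t < \Delta$ (chosen because its transform has a simple closed form), and `DELTA` and `FC` are arbitrary values; the function names are hypothetical.

```python
import numpy as np

DELTA = 1.0  # width of the baseband test pulse (illustrative value)
FC = 5.0     # carrier frequency in Hz (illustrative value)

def s(t):
    """Baseband test signal: unit-height pulse on 0 < t < DELTA (assumed)."""
    return np.where((t > 0) & (t < DELTA), 1.0, 0.0)

def S(f):
    """Closed-form transform of that pulse:
    exp(-j pi f DELTA) * sin(pi f DELTA) / (pi f), written with np.sinc."""
    return np.exp(-1j * np.pi * f * DELTA) * DELTA * np.sinc(f * DELTA)

def modulated(t):
    """The second term of the amplitude-modulated signal: s(t) cos(2 pi fc t)."""
    return s(t) * np.cos(2 * np.pi * FC * t)

def transform(x, f, t_lo=-1.0, t_hi=2.0, n=60_000):
    """Midpoint-rule estimate of the integral of x(t) exp(-j 2 pi f t) dt."""
    dt = (t_hi - t_lo) / n
    t = t_lo + (np.arange(n) + 0.5) * dt
    return np.sum(x(t) * np.exp(-2j * np.pi * f * t)) * dt

freqs = np.linspace(-8.0, 8.0, 33)
numeric = np.array([transform(modulated, f) for f in freqs])
predicted = (S(freqs - FC) + S(freqs + FC)) / 2  # shifted copies, each halved

modulation_error = float(np.max(np.abs(numeric - predicted)))
print(f"max |numeric - predicted| = {modulation_error:.2e}")
```

The numerically computed spectrum matches the two half-amplitude copies of $S(f)$ centered at $+f_c$ and $-f_c$.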

Note how in this figure the signal s (t) is defined in the frequency domain. To find its time domain representation, we simply use the inverse Fourier transform.

Exercise 4.8.3

What is the signal s (t) that corresponds to the spectrum shown in the upper panel of Figure 4.12?

Exercise 4.8.4

What is the power in x (t), the amplitude-modulated signal? Try the calculation in both the time and frequency domains.

In this example, we call the signal $s(t)$ a baseband signal because its power is contained at low frequencies. Signals such as speech and the Dow Jones averages are baseband signals. The baseband signal's bandwidth equals $W$, the highest frequency at which it has power. Since $x(t)$'s spectrum is confined to a frequency band not close to the origin (we assume $f_c \gg W$), we have a bandpass signal. The bandwidth of a bandpass signal is not its highest frequency, but the range of positive frequencies where the signal has power. Thus, in this example, the bandwidth is $2W$ Hz. Why a signal's bandwidth should depend on its spectral shape will become clear once we develop communications systems.
